Imagine you visit a web site and are instantly and automatically logged in.

Without filling in username and password in a login form.

Without filling in the OpenID field and clicking three times.

Without clicking the button of your browser's autologin extension.

Without a single cookie sent from your browser to the server.

Yet you authenticate yourself to the web server and get authorized by it.

Yes, this is possible - with SSL client certificates. I use them daily to access my self-hosted online bookmark manager and feed reader.

During the last weeks I spent quite some time implementing SSL client certificate support in SemanticScuttle, and I want to share my experiences here.

- A bit about client certificates

- Server side

- Adding client certificate support to your PHP application

- Links

A bit about client certificates

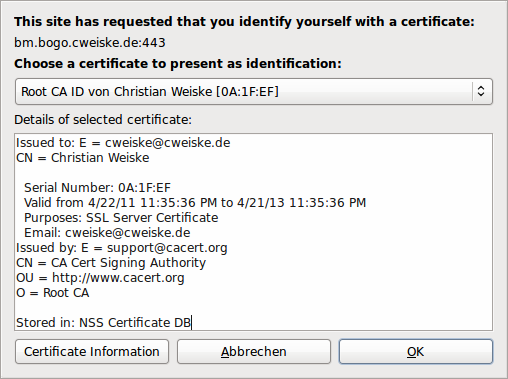

Client certificates are, as the name indicates, installed on the client - that is, the web browser - and transferred to the server when the server requests one and the user agrees to send it.

The certificates are issued by a Certificate Authority (CA): a commercial issuer, a free one like CAcert.org, your company, or just you yourself, thanks to the power of the openssl command line tool (or a web frontend like OpenCA).
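If you want to play the CA yourself without leaving PHP, the OpenSSL extension can do roughly what the openssl command line tool does. The following is a minimal sketch, not a production CA: the CA name, the subject name, the email address, the validity periods and the serial numbers are made-up placeholders, and the certificate extensions come from whatever your system's openssl.cnf provides.

```php
<?php
// Sketch: act as a tiny CA with PHP's OpenSSL extension.
// All names, addresses, lifetimes and serials are illustrative placeholders.

function makeRsaKey()
{
    // Fresh 2048-bit RSA key pair
    return openssl_pkey_new([
        'private_key_bits' => 2048,
        'private_key_type' => OPENSSL_KEYTYPE_RSA,
    ]);
}

$opts = ['digest_alg' => 'sha256'];

// 1. Self-signed CA certificate (passing null as CA certificate means "self-sign")
$caKey  = makeRsaKey();
$caCsr  = openssl_csr_new(['commonName' => 'My Test CA'], $caKey, $opts);
$caCert = openssl_csr_sign($caCsr, null, $caKey, 3650, $opts, 1);

// 2. Client certificate, signed with the CA's private key
$clientKey = makeRsaKey();
$clientCsr = openssl_csr_new(
    ['commonName' => 'Jane Doe', 'emailAddress' => 'jane@example.org'],
    $clientKey,
    $opts
);
$clientCert = openssl_csr_sign($clientCsr, $caCert, $caKey, 365, $opts, 2);

// PEM strings for the certificate and its private key
openssl_x509_export($clientCert, $clientPem);
openssl_pkey_export($clientKey, $clientKeyPem);
```

To import the result into a browser you would usually bundle certificate and key into a PKCS#12 file, for example with openssl_pkcs12_export().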

The CA is responsible for giving you a client certificate and a matching private key for it. The client certificate itself is sent to the server, while the private key never leaves your machine and is used to sign data exchanged during the SSL handshake. The server verifies this signature with the public key contained in the certificate, so it knows that you really are the one the certificate belongs to.
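The principle behind this can be illustrated with PHP's OpenSSL functions. This is a simplified sketch of "proof of possession", not the actual TLS handshake: a throwaway self-signed certificate stands in for a real client certificate, and a random challenge stands in for the handshake data that the client really signs.

```php
<?php
// Illustration only: sign with the private key, verify with the certificate.

$key  = openssl_pkey_new(['private_key_bits' => 2048, 'private_key_type' => OPENSSL_KEYTYPE_RSA]);
$csr  = openssl_csr_new(['commonName' => 'Test User'], $key, ['digest_alg' => 'sha256']);
$cert = openssl_csr_sign($csr, null, $key, 365, ['digest_alg' => 'sha256']);

// Client side: sign the challenge with the private key
$challenge = random_bytes(32);
openssl_sign($challenge, $signature, $key, OPENSSL_ALGO_SHA256);

// Server side: the certificate (i.e. its public key) is enough to verify
$publicKey = openssl_pkey_get_public($cert);
$ok = openssl_verify($challenge, $signature, $publicKey, OPENSSL_ALGO_SHA256) === 1;

// Any change to the signed data makes verification fail
$tampered = openssl_verify($challenge . 'x', $signature, $publicKey, OPENSSL_ALGO_SHA256) === 1;
```

Only the holder of the private key can produce a signature that verifies against the certificate, which is why sending the certificate alone is not enough to impersonate someone.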

Note that client certificates can only be used when accessing the server with HTTPS.

The certificates also have an expiration date, after which they are not valid anymore and need to be renewed. When implementing access control, we need to take this into account.

Server side

Your web server must be configured for HTTPS, which means you need a SSL server certificate. Get that working first, before tackling the client certificates.

Until some years ago, there was a rule: "one port, one certificate". You could only run one single HTTPS website on a single port on the server, except in certain circumstances. The HTTPS port is 443, and using another port for the next SSL-secured domain causes problems with firewalls and makes linking much harder: just using "https://example.org/" no longer works, you need a port number in it which you, as a visitor, don't know in advance.

Those "certain circumstances" were wildcard certificates, which only help if all you want to secure are subdomains: app1.example.org, app2.example.org etc.

The problem lies in the foundations of SSL: the certificate exchange happens before any HTTP data is sent, and since the certificate contains the domain name, the server cannot know which certificate to deliver when several SSL hosts share the same IP address and port.

Server Name Indication

Fast-forward to now. We have SNI, which solves the problem and leaves nobody an excuse anymore for not having SSL-secured domains.

With SNI, the browser sends (indicates) the host name it wants to contact at the beginning of the certificate exchange, which lets the server return the correct certificate.

All current browsers on current operating systems support it. Older systems such as Windows XP, or those with OpenSSL < 0.9.8f, do not support it and will get the certificate of the first SSL host.

Obtaining a server certificate

I assume you're going to get the certificate from CAcert.

First, generate a Certificate Signing Request with the CSR generator. Store the key file under

/etc/ssl/private/bookmarks.cweiske.de.key

Use the .csr file and the CAcert web interface to generate a signed certificate. Store it as

/etc/ssl/private/bookmarks.cweiske.de-cacert.pem

Now fetch both official CAcert certificates (root and class 3) and concatenate them into

/etc/ssl/private/cacert-1and3.crt
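If you prefer to create the key and the CSR with PHP instead of a CSR generator, a sketch could look like the following. It writes to the system temp directory instead of /etc/ssl/private so that it can run unprivileged; the 2048-bit key size and the sha256 digest are assumptions, so check what your CA expects.

```php
<?php
// Sketch: generate the server key and a CSR to paste into the CAcert web interface.

$host = 'bookmarks.cweiske.de';
$dir  = sys_get_temp_dir(); // on the real server: /etc/ssl/private

$key = openssl_pkey_new(['private_key_bits' => 2048, 'private_key_type' => OPENSSL_KEYTYPE_RSA]);
$csr = openssl_csr_new(['commonName' => $host], $key, ['digest_alg' => 'sha256']);

// Private key: keep it readable by its owner only
openssl_pkey_export_to_file($key, "$dir/$host.key");
chmod("$dir/$host.key", 0600);

// CSR: this is what gets submitted to the CA
openssl_csr_export_to_file($csr, "$dir/$host.csr");
```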

Server configuration

A basic virtual host configuration with SSL looks like this:

```apache
<VirtualHost *:443>
    ServerName bookmarks.cweiske.de

    LogFormat "%V %h %l %u %t \"%r\" %s %b" vcommon
    CustomLog /var/log/apache2/access_log vcommon

    VirtualDocumentRoot /home/cweiske/Dev/html/hosts/bookmarks.cweiske.de
    <Directory /home/cweiske/Dev/html/hosts/bookmarks.cweiske.de>
        AllowOverride all
    </Directory>

    SSLEngine On
    SSLCertificateFile /etc/ssl/private/bookmarks.cweiske.de-cacert.pem
    SSLCertificateKeyFile /etc/ssl/private/bookmarks.cweiske.de.key
    SSLCACertificateFile /etc/ssl/private/cacert-1and3.crt
</VirtualHost>
```

Apart from that, you might need to enable the SSL module in your web server, e.g. by executing

```shell
$ a2enmod ssl
```

Restart your HTTP server. You should now be able to request the pages via HTTPS.

Make the server request client certificates

A web server does not require any kind of client certificate by default; this is something that needs to be activated.

Client certificates may be required or optional, which leaves you the comfortable option of letting users log in normally via username/password or with SSL certificates - just as they wish.

Modify your virtual host as follows:

```apache
SSLVerifyClient optional
SSLVerifyDepth 1
SSLOptions +StdEnvVars
```

There are several options you need to set:

- SSLVerifyClient optional

  You may choose optional or require here. optional asks the browser for a client certificate, but also accepts the connection if the browser (the user) chooses not to send one. This is the best option if you want users to be able to log in both with and without a certificate.

  The setting require makes the web server terminate the connection when the browser sends no client certificate. This option may be used when all users have a client certificate installed.

  If you want to allow self-signed certificates that are not signed by one of the CAs your server trusts, use SSLVerifyClient optional_no_ca.

- SSLVerifyDepth 1

  Your client certificate is signed by a certificate authority (CA), and your web server trusts the CA specified in SSLCACertificateFile. CA certificates themselves may be signed by another authority, e.g.:

  CAcert >> your own CA >> your client certificate

  In this case you need a higher depth. For most cases, 1 is enough.

- SSLOptions +StdEnvVars

  This makes your web server pass the SSL environment variables to PHP, so that your application can detect that a client certificate is available and read its data.

  In case you need the complete client certificate, you have to add +ExportCertData to the line. This multiplies the amount of data exchanged between the web server process and PHP, which is why it is usually left disabled.

If you restart your web server now, it will request a client certificate from you.

It may happen that the browser does not pop up the cert selection dialog. The web server may send a list of CAs that it considers valid to the browser. If the browser does not have a certificate from one of those CAs, it does not display the popup.

You can fix this issue by setting SSLCADNRequestFile or SSLCADNRequestPath, which control the list of acceptable CA names the server sends to the browser.

Thanks to Gerard Caulfield for bringing this to my attention.

Logging

With Apache, you may use SSL client certificate details in your log files: create a new log format and use the SSL client environment variables:

```apache
%{SSL_CLIENT_S_DN_Email}e %{SSL_CLIENT_M_SERIAL}e
```

Thanks to Hans Schou for this idea.

Adding client certificate support to your PHP application

Let's collect the requirements for SSL client cert support in a typical PHP application:

- Users get automatically logged in when they visit the page. To be able to do that, we need to know that a certificate belongs to a certain user account.

- Register an SSL certificate with an existing account. You also want to let users register multiple certificates, because they probably have different ones at home and at work.

- When registering a new account, automatically associate the client certificate with the new account. This is the most unobtrusive way of getting clients to use SSL certificates.

- Let users remove associated client certificates. Their computer may be compromised or the certificate has just been lost (yes, you can revoke certificates, but that's another story).

- Don't bitch when users renew their certificate. Log them in as before.

Accessing certificate data

When a client certificate is available, the $_SERVER variable contains a bunch of SSL_CLIENT_* variables.

$_SERVER['SSL_CLIENT_VERIFY'] is an important one. Don't use the certificate if it does not equal SUCCESS. When no certificate is passed, it is NONE.

Another important variable is SSL_CLIENT_M_SERIAL, the serial number that uniquely identifies a certificate issued by a given Certificate Authority.

All variables with SSL_CLIENT_I_* are about the issuer, that is the CA. SSL_CLIENT_S_* are about the "subject", the user that sent the client certificate.

SSL_CLIENT_S_DN_CN for example contains the user's name - "Christian Weiske" in my case - which can be used during registration together with SSL_CLIENT_S_DN_Email to give a smooth user experience.

Always remember that you need to configure your web server to pass the variables to your PHP process.
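Putting these rules together, a small helper could extract the identifying data of a verified certificate. The function name and the shape of the returned array are hypothetical; passing $_SERVER in as a parameter keeps the helper easy to test.

```php
<?php
/**
 * Return the identifying data of a verified client certificate, or null.
 * Expects the mod_ssl variables exported via "SSLOptions +StdEnvVars".
 */
function getVerifiedClientCert(array $server): ?array
{
    // Only trust the data if mod_ssl verified the certificate
    if (($server['SSL_CLIENT_VERIFY'] ?? 'NONE') !== 'SUCCESS') {
        return null;
    }
    // Serial and issuer DN are needed to identify the certificate
    foreach (['SSL_CLIENT_M_SERIAL', 'SSL_CLIENT_I_DN'] as $required) {
        if (empty($server[$required])) {
            return null;
        }
    }
    return [
        'serial'   => $server['SSL_CLIENT_M_SERIAL'],
        'issuerDn' => $server['SSL_CLIENT_I_DN'],
        // Subject data is optional, but handy for registration forms
        'name'     => $server['SSL_CLIENT_S_DN_CN'] ?? null,
        'email'    => $server['SSL_CLIENT_S_DN_Email'] ?? null,
    ];
}
```

In the application this would be called as getVerifiedClientCert($_SERVER); a null result means "no usable certificate, fall back to the login form".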

Certificate identification

The brute-force way of associating a user account with a client certificate is to enable +ExportCertData and store the whole client certificate in your user database.

This is bad for two reasons:

- When the certificate expires and gets renewed, the user cannot login anymore.

- You need to pass more data to each PHP process and store more data than needed in your user database.

According to the PostgreSQL manual,

The combination of certificate serial number and certificate issuer is guaranteed to uniquely identify a certificate (but not its owner — the owner ought to regularly change his keys, and get new certificates from the issuer).

So we can use SSL_CLIENT_M_SERIAL together with SSL_CLIENT_I_DN to uniquely identify a certificate, without storing it as a whole.

A "renewed" certificate is in reality a new certificate with a new serial number. Thus the serial changes after renewal.

The combination of issuer + SSL_CLIENT_S_DN_Email should be more stable: it lets users log in even after renewing their certificate, but only if their email address did not change.
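A lookup that prefers the exact serial + issuer match and falls back to issuer + email for renewed certificates could be sketched as follows. This is not SemanticScuttle's code: findUserIdForCert() is a hypothetical helper, and the in-memory list of rows stands in for a database query. The column names mirror the ones SemanticScuttle uses when registering certificates.

```php
<?php
/**
 * Find the user a client certificate belongs to.
 * $stored: registered certificates, each with uId, sslSerial,
 * sslClientIssuerDn and sslEmail (stand-in for a DB query).
 */
function findUserIdForCert(array $stored, string $serial, string $issuerDn, ?string $email): ?int
{
    // 1. Exact match: serial + issuer identify exactly one certificate
    foreach ($stored as $row) {
        if ($row['sslSerial'] === $serial && $row['sslClientIssuerDn'] === $issuerDn) {
            return (int) $row['uId'];
        }
    }
    // 2. Fallback for renewed certificates: same issuer, same email address
    if ($email !== null && $email !== '') {
        foreach ($stored as $row) {
            if ($row['sslClientIssuerDn'] === $issuerDn && $row['sslEmail'] === $email) {
                return (int) $row['uId'];
            }
        }
    }
    return null;
}
```

The email fallback is only as trustworthy as the CA's email verification, and it returns the first match if two accounts registered certificates with the same address, so you may want to restrict it further.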

SemanticScuttle uses the following code to check if a certificate is valid:

Storing user certificates

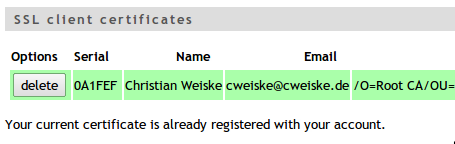

While you can calculate a hash over serial and issuer DN, it's better to store each of the values separately in the database. This has the advantage that you may display them in the user's certificate list, and allow him to identify the cert later on.

Here is the database table structure of SemanticScuttle:

Apart from the two required columns, we also store the subject's name and email to again allow better certificate identification:

Certificate list in SemanticScuttle
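One possible layout for such a table is sketched below and tried out against an in-memory SQLite database via PDO. The table name users_sslclientcerts, the column types and the UNIQUE constraint are assumptions; only the column names are taken from SemanticScuttle's registration code.

```php
<?php
// Hypothetical certificate table, tested with in-memory SQLite.
$db = new PDO('sqlite::memory:');
$db->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
$db->exec(
    'CREATE TABLE users_sslclientcerts (
        id                INTEGER PRIMARY KEY AUTOINCREMENT,
        uId               INTEGER NOT NULL,
        sslSerial         VARCHAR(32) NOT NULL,
        sslClientIssuerDn VARCHAR(1024) NOT NULL,
        sslName           VARCHAR(64) NOT NULL,
        sslEmail          VARCHAR(64) NOT NULL,
        UNIQUE (sslSerial, sslClientIssuerDn) -- one row per certificate
    )'
);

$insert = $db->prepare(
    'INSERT INTO users_sslclientcerts (uId, sslSerial, sslClientIssuerDn, sslName, sslEmail)
     VALUES (?, ?, ?, ?, ?)'
);
$insert->execute([1, '0A3F', '/O=Root CA/CN=CA Cert Signing Authority', 'Jane Doe', 'jane@example.org']);

// Certificate list for the profile page of user 1
$list = $db->prepare('SELECT sslSerial, sslName, sslEmail FROM users_sslclientcerts WHERE uId = ?');
$list->execute([1]);
$rows = $list->fetchAll(PDO::FETCH_ASSOC);
```

The UNIQUE constraint makes the database reject a second registration of the same certificate, whichever account tries it.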

Registering certificates

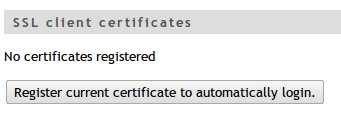

The user needs to be able to manually register the current certificate with the application. Registering certificates automatically, without asking for confirmation, may not be desired, so it is best to just drop that idea.

SemanticScuttle offers a "register current certificate" button on the user profile page:

No certificates and a registration button in SemanticScuttle

Another option that improves usability is to associate the client certificate upon user registration. There should be a checkbox that makes the user aware of this and allows disabling it.

The following code registers the current certificate with the user's account in SemanticScuttle:

```php
$query = 'INSERT INTO ' . $this->getTableName()
    . ' ' . $this->db->sql_build_array(
        'INSERT', array(
            // Store the identifying parts of the current client certificate
            'uId'               => $userId,
            'sslSerial'         => $_SERVER['SSL_CLIENT_M_SERIAL'],
            'sslClientIssuerDn' => $_SERVER['SSL_CLIENT_I_DN'],
            'sslName'           => $_SERVER['SSL_CLIENT_S_DN_CN'],
            'sslEmail'          => $_SERVER['SSL_CLIENT_S_DN_Email']
        )
    );
```

You might want to store the certificate's expiration date and automatically remove the certificate once that date is reached.
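mod_ssl exposes the end of the validity period as SSL_CLIENT_V_END. Assuming it arrives in the format OpenSSL prints, e.g. "Jan  1 00:00:00 2030 GMT", the sketch below converts it into a Unix timestamp that you could store in an extra column, and filters out expired rows. The column name sslValidTo is hypothetical; it is not part of the columns shown in the registration code.

```php
<?php
/**
 * Convert an SSL_CLIENT_V_END value like "Jan  1 00:00:00 2030 GMT"
 * into a Unix timestamp; null if the format is not recognized.
 */
function certEndToTimestamp(string $vEnd): ?int
{
    // Collapse the double space OpenSSL puts before single-digit days
    $normalized = preg_replace('/\s+/', ' ', trim($vEnd));
    $date = DateTime::createFromFormat('M j H:i:s Y T', $normalized);
    return $date === false ? null : $date->getTimestamp();
}

/**
 * Keep only certificates whose (hypothetical) sslValidTo lies in the future.
 */
function removeExpiredCerts(array $certs, int $now): array
{
    return array_values(array_filter(
        $certs,
        fn (array $cert): bool => $cert['sslValidTo'] > $now
    ));
}
```

In a real application the filter would rather be a DELETE query run periodically, but the comparison is the same.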

OCSP checks

The Online Certificate Status Protocol (OCSP) allows you to check if a certificate has been revoked. Revocation is useful if your certificate has been compromised somehow: it could have been stolen, or its password may have become public.

A certificate contains information about the CA's OCSP server; you can view it by running

```shell
$ openssl x509 -text -in /path/to/cert.pem
...
        Authority Information Access:
            OCSP - URI:http://ocsp.cacert.org
...
```

This information is not available in the standard SSL environment variables but needs to be extracted from the certificate data, which means that +ExportCertData needs to be enabled.
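With +ExportCertData enabled, PHP receives the PEM certificate in $_SERVER['SSL_CLIENT_CERT'], and openssl_x509_parse() returns the Authority Information Access extension as text in the same form the command line tool prints. A sketch for pulling out the OCSP URL, with hypothetical function names:

```php
<?php
/**
 * Extract the OCSP responder URL from a PEM certificate,
 * e.g. $_SERVER['SSL_CLIENT_CERT'].
 */
function getOcspUrl(string $pem): ?string
{
    $info = openssl_x509_parse($pem);
    if ($info === false || !isset($info['extensions']['authorityInfoAccess'])) {
        return null;
    }
    return parseOcspUrl($info['extensions']['authorityInfoAccess']);
}

/**
 * Parse the textual Authority Information Access extension, which has one
 * entry per line, e.g. "OCSP - URI:http://ocsp.cacert.org".
 */
function parseOcspUrl(string $aia): ?string
{
    return preg_match('/^\s*OCSP - URI:(\S+)/m', $aia, $m) ? $m[1] : null;
}
```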

PHP's OpenSSL extension does not have a method to generate, send or evaluate OCSP requests, so checking the certificate is only possible with the openssl ocsp command line tool or by implementing it yourself.

The command line tool is easy to use once you have stored the client certificate on disk. First we need the URL:

```shell
$ openssl x509 -in /tmp/client-cert.pem -noout -text | grep OCSP
                OCSP - URI:http://ocsp.cacert.org
```

Now that we have the URL, we can query it:

```shell
$ openssl ocsp -CAfile /etc/ssl/private/cacert-1and3.crt \
    -issuer /etc/ssl/private/cacert-1and3.crt \
    -cert /tmp/client-cert.pem \
    -url http://ocsp.cacert.org
```

Example output of a revoked certificate:

At the time of writing, there sadly does not seem to be any PHP library that eases verifying SSL client certificates.
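Without such a library, one option is to call the openssl ocsp command from PHP and read its result line, which has the form "<certificate file>: good" (or "revoked" / "unknown"). The sketch below reuses the command from above; the function names are hypothetical, and passing the same CA bundle as -CAfile and -issuer simply mirrors that command.

```php
<?php
/** Build the "openssl ocsp" call with all arguments shell-escaped. */
function buildOcspCommand(string $caFile, string $issuerFile, string $certFile, string $url): string
{
    return 'openssl ocsp'
        . ' -CAfile ' . escapeshellarg($caFile)
        . ' -issuer ' . escapeshellarg($issuerFile)
        . ' -cert ' . escapeshellarg($certFile)
        . ' -url ' . escapeshellarg($url)
        . ' 2>&1';
}

/** Read "good", "revoked" or "unknown" from the output; null if absent. */
function parseOcspStatus(string $output, string $certFile): ?string
{
    $pattern = '/^' . preg_quote($certFile, '/') . ':\s*(good|revoked|unknown)\b/m';
    return preg_match($pattern, $output, $m) ? $m[1] : null;
}

/** Check a PEM certificate (e.g. $_SERVER['SSL_CLIENT_CERT']) via OCSP. */
function checkOcsp(string $pem, string $caFile, string $url): ?string
{
    // The command line tool needs the certificate as a file
    $certFile = tempnam(sys_get_temp_dir(), 'ocsp');
    file_put_contents($certFile, $pem);
    exec(buildOcspCommand($caFile, $caFile, $certFile, $url), $lines);
    unlink($certFile);
    return parseOcspStatus(implode("\n", $lines), $certFile);
}
```

A null result means the responder could not be asked or answered in an unexpected way; whether you then let the user in is a policy decision.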

Let the HTTP server do the work

Apache since version 2.3 is able to do the OCSP checks itself if you activate the SSLOCSPEnable option.

Links

- Server Name Indication

- Apache's mod_ssl

- PHP's OpenSSL extension

- Secure client authentication with php-cert-auth (2010-09-28) - introduces a PHP class that's not really worth using since it always requires full SSL certificate data and does not give you goodies like OCSP checks. Better not use it.

- X.509 PKI login with PHP and Apache (2008-05-30) - similar to, if not as comprehensive as, this article.

- Hans Schou made an introduction video based on this blog post.