Instead of writing our own search, we managed to integrate REST API data
into TYPO3's native indexed_search results.

This gives us a mix of website content and REST data
in one result list.

A TYPO3 v7.6 site at work consists of

a normal page tree with content that is searchable with

indexed_search.

A separate management interface is used by editors to administer
some domain-specific data outside of TYPO3.

Those data are available via a REST API, which is utilized by one of our

TYPO3 extensions to display data on the website.

Those externally managed data should now be searchable on the TYPO3

website.

Integration options

I pondered a long time how to tackle this task.

There were two approaches:

- Integrate API data into indexed_search, so that they appear inside the
  normal search result list.

- Have separate searches for website content and API content.
  The search result list would have two tabs, one for each type.
  An indicator would show how many results are found for each type
  and the user would need to switch between them.

The second option looked easier at first because it does not require

one to dig into indexed_search.

But after thinking long enough I found that I would be replicating all

the basic features needed for search: Listing data, paging,

and those tabs as well.

The customer would then also demand that we'd have an overview page

showing the first 3 results from each of the types, with a

"view all" button.

In the end I decided to use option #1 because it would feel most integrated

and would mean less code.

How indexed_search + crawler work together

First of all, I have to recommend
Indexed Search & Crawler - The Missing Manual
because it explains many things and helps with the basic setup.

URL list generation

You may create crawler configurations and indexed_search configurations

in the TYPO3 page tree.

Both are similar, yet different. How do they work together?

- The crawler scheduler task and command line script both start
  crawler_lib::CLI_run().

- cli_hooks are executed.
  indexed_search has registered its IS\CrawlerHook as one,
  and that is started.

- All indexing configuration records are checked for their next execution
  time.
  If one of them needs to be run, it is put into the crawler queue
  as a callback that runs IS\CrawlerHook again.

- The crawler queue is processed and calls
  IS\CrawlerHook::crawler_execute().

- IS\CrawlerHook::crawler_execute_type4() gets a URL list
  via crawler_lib::getUrlsForPageRow().

- Crawler configuration records are searched in the rootline.

- URLs are generated from the configurations found
  (crawler_lib::compileUrls()).

- URLs are queued with crawler_lib::urlListFromUrlArray().

Note that the crawler only processes entries that

were in the queue when it started.

Queue items added during the crawl run are not processed yet,

but in a later run.

This means that it may take 6 or 7 crawler runs until it gets

to your page with the indexing and crawler configuration.

It's better to use the backend module

Info -> Site crawler

to enqueue your custom URLs during development, or have

a minimal page tree with one page :)
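
To see why several runs are needed, here is a toy model of that queue behaviour. It is not the crawler's real code: the function runCrawlerOnce() and the three-step chain (indexing configuration, page, detail URL) are assumptions made up for illustration. Each run only works on a snapshot of the queue taken at its start.

```php
<?php
// Toy model of the crawler queue. Not the real tx_crawler code;
// all names here are hypothetical.

/**
 * Process only the items that were queued when the run started.
 * Items added by callbacks stay in the queue for the next run.
 */
function runCrawlerOnce(array &$queue): int
{
    $snapshot = $queue; // entries present at start of the run
    $queue = [];        // entries added during the run collect here
    foreach ($snapshot as $item) {
        $item($queue);
    }
    return count($snapshot);
}

$indexed = [];

// Assumed chain: indexing configuration -> page -> API detail URL
$detailUrl = function (array &$queue) use (&$indexed) {
    $indexed[] = '/index.php?id=1&tx_myparam=11';
};
$page = function (array &$queue) use ($detailUrl) {
    $queue[] = $detailUrl;
};
$indexingConfig = function (array &$queue) use ($page) {
    $queue[] = $page;
};

$queue = [$indexingConfig];
$runs = 0;
while (count($queue) > 0) {
    runCrawlerOnce($queue);
    $runs++;
}

echo "Crawler runs needed: $runs\n";
```

Every additional level of indirection (more pages above the one carrying the configuration) costs one more run in this model, which matches the behaviour described above.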

Crawler URLs

Crawler configuration records

are URL generators.

Without special configuration, they return the URL for a page ID.

Pretty dull.

The crawler manual shows that they can be used for more, and gives a

language configuration as example: &L=[1-3|5|7].

For each page ID this will generate 5 URLs, one for each of the listed

languages 1, 2, 3, 5 and 7.
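
The expansion of such a value list can be sketched in a few lines. This is a simplified re-implementation for illustration, not the crawler's own code; the function name expandValueList() is made up, and it only handles integer ranges and plain values separated by "|".

```php
<?php
/**
 * Expand a bracketed crawler value such as "1-3|5|7" (brackets already
 * removed) into a flat list of values.
 * Hypothetical helper, handles only "from-to" integer ranges and literals.
 */
function expandValueList(string $value): array
{
    $result = [];
    foreach (explode('|', $value) as $part) {
        $part = trim($part);
        if (preg_match('/^(\d+)-(\d+)$/', $part, $matches)) {
            // "1-3" becomes 1, 2, 3
            foreach (range((int)$matches[1], (int)$matches[2]) as $number) {
                $result[] = $number;
            }
        } elseif ($part !== '') {
            // single value: keep numbers as integers, everything else as string
            $result[] = ctype_digit($part) ? (int)$part : $part;
        }
    }
    // drop duplicates and reindex
    return array_values(array_unique($result));
}

print_r(expandValueList('1-3|5|7'));
```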

Apart from those value ranges, you may specify
a _TABLE configuration:

&myparam=[_TABLE:tt_myext_items;_PID:15;_WHERE: and hidden = 0]

This is where we need to step in: We may handle those [FOO] values

and expand them ourselves with a hook:

ext_localconf.php

$GLOBALS['TYPO3_CONF_VARS']['SC_OPTIONS']['crawler/class.tx_crawler_lib.php']['expandParameters'][] = \Vnd\Ext\UrlGenerator::class . '->expandParameters';

The hook gets called for every bracketed URL parameter value.

$params['currentValue'] contains the value without brackets.

The code in the hook method only has to expand the value to a list of
IDs and store that in $params['paramArray'][$params['currentKey']]:

<?php
namespace Vnd\Ext;

/**
 * Expands custom URL parameter values for the crawler.
 *
 * @see TYPO3\CMS\IndexedSearch\Example\CrawlerHook
 */
class UrlGenerator
{
    /**
     * Add GET parameters to crawler page.
     *
     * This method is registered as hook for crawler/class.tx_crawler_lib.php
     * and is called when crawler configuration "Configuration" fields
     * are expanded (`&L=[1-3]&bar=[FOO]`).
     *
     * @param array  $params Keys:
     *                       - &pObj
     *                       - &paramArray
     *                       - currentKey
     *                       - currentValue
     *                       - pid
     * @param object $pObj   Crawler lib instance
     *
     * @return void
     */
    public function expandParameters(&$params, $pObj)
    {
        if ($params['currentValue'] === 'FOO') {
            //replace this with your own ID generation code
            $params['paramArray'][$params['currentKey']] = [11, 23, 42];
        }
    }
}

Now when the crawler processes page id 1 and finds a matching

configuration record that contains the following configuration:

&tx_myparam=[FOO]

our hook will be called and expand that config to three IDs:

/index.php?id=1&tx_myparam=11

/index.php?id=1&tx_myparam=23

/index.php?id=1&tx_myparam=42

Crawler will then put three URLs into the queue and index them

in the next run.
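
The following sketch runs without TYPO3 and shows the data flow from the hook call to the final URLs. It is only an approximation under assumptions: DemoUrlGenerator is a stand-in that repeats the hook logic so the example is self-contained, the $params array mimics the keys documented in the hook's docblock, and compileUrlsSketch() is a made-up helper, not the crawler's own compileUrls().

```php
<?php
// Stand-in for the hook class, so the sketch runs without TYPO3.
class DemoUrlGenerator
{
    public function expandParameters(&$params, $pObj)
    {
        if ($params['currentValue'] === 'FOO') {
            $params['paramArray'][$params['currentKey']] = [11, 23, 42];
        }
    }
}

/**
 * Hypothetical helper: builds one URL per combination of parameter values.
 */
function compileUrlsSketch(array $paramArray, array $urls): array
{
    foreach ($paramArray as $name => $values) {
        $next = [];
        foreach ($urls as $url) {
            foreach ($values as $value) {
                $next[] = $url . '&' . $name . '=' . rawurlencode((string)$value);
            }
        }
        $urls = $next;
    }
    return $urls;
}

// Simulate what happens for "&tx_myparam=[FOO]" on page id 1.
$paramArray = [];
$params = [
    'paramArray'   => &$paramArray, // passed by reference, like in the docblock
    'currentKey'   => 'tx_myparam',
    'currentValue' => 'FOO',
    'pid'          => 1,
];
(new DemoUrlGenerator())->expandParameters($params, null);

$urls = compileUrlsSketch($paramArray, ['/index.php?id=1']);
print_r($urls);
```

Because paramArray is handed over by reference, the hook can fill in the values in place and does not need to return anything.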

The page and the plugin that show the API data must be cacheable.
Otherwise the data will not be indexed.

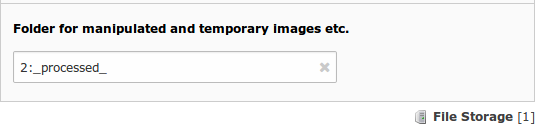

Also make sure you set the

page title for indexing.

Enable cHash generation in the crawler configuration.

Cache invalidation

When a visitor uses the website search and indexed_search generates

a search result set,

it checks if the page ID is still available.

Deactivated and deleted pages will thus not show up in the results.

This does not work for API results for obvious reasons.

TYPO3 database records integrated into search with an

indexed_search configuration get

removed on the next crawler run.

Until then, they are still findable:

In fact, if a record is removed its indexing entry will also be

removed upon next indexing - simply because the "set_id" is used to

finally clear out old entries after a re-index!

This works as follows:

- The indexing configuration for records is executed and the records are
  indexed.
  Both config ID and set_id (timestamp of index config run) are
  saved with the search index records.

- When the index configuration is run the next time, new search index entries
  will be created for all records - with a new set_id.
  There are two search index records for each page now.

- Once the index configuration is fully processed
  (no URLs for that config ID in the queue anymore),
  the search index entries with the same index config ID and old
  set_ids are removed.
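
The cleanup steps above can be modelled with a plain array. This is a toy sketch only: the row layout (url, cfg, set_id) and the functions indexRecords() and removeOldSets() are assumptions for illustration and do not mirror the real index_phash schema or indexed_search code.

```php
<?php
/**
 * Toy model: store one search index row per URL, tagged with the
 * indexing configuration ID and the set_id of the current run.
 */
function indexRecords(array &$index, int $configId, int $setId, array $urls): void
{
    foreach ($urls as $url) {
        $index[] = ['url' => $url, 'cfg' => $configId, 'set_id' => $setId];
    }
}

/**
 * Toy model: once a configuration is fully processed, drop all rows of
 * that configuration that carry an older set_id.
 */
function removeOldSets(array &$index, int $configId, int $currentSetId): void
{
    $index = array_values(array_filter(
        $index,
        function (array $row) use ($configId, $currentSetId) {
            return $row['cfg'] !== $configId || $row['set_id'] === $currentSetId;
        }
    ));
}

$index = [];

// First run: three API records exist.
indexRecords($index, 7, 1000, ['?id=1&tx_myparam=11', '?id=1&tx_myparam=23', '?id=1&tx_myparam=42']);

// Second run: record 23 has been deleted in the API in the meantime.
indexRecords($index, 7, 2000, ['?id=1&tx_myparam=11', '?id=1&tx_myparam=42']);
$countBeforeCleanup = count($index); // old and new rows exist side by side

// Queue is empty for config 7: remove rows with old set_ids.
removeOldSets($index, 7, 2000);

print_r(array_column($index, 'url'));
```

The row for the deleted record 23 only exists with the old set_id, so it disappears together with the outdated duplicates of the other two.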

This also works for API data.

The indexing configuration "pagetree" processes the API page ID, which

in turn creates the API detail URLs through the crawler configuration.

After reindexing the data, their old search index data get deleted.

The only thing to remember is not to use a "Crawler Queue"

scheduler task, because then the phash records will have no index

configuration ID, and thus will not be deleted on the next run.

Development

The "reset all index data" SQL script is invaluable during development:

TRUNCATE TABLE index_debug;
TRUNCATE TABLE index_fulltext;
TRUNCATE TABLE index_grlist;
TRUNCATE TABLE index_phash;
TRUNCATE TABLE index_rel;
TRUNCATE TABLE index_section;
TRUNCATE TABLE index_stat_search;
TRUNCATE TABLE index_stat_word;
TRUNCATE TABLE index_words;
TRUNCATE TABLE tx_crawler_process;
TRUNCATE TABLE tx_crawler_queue;
UPDATE index_config SET timer_next_indexing = 0;